Updated Wednesday, 11 September 2019

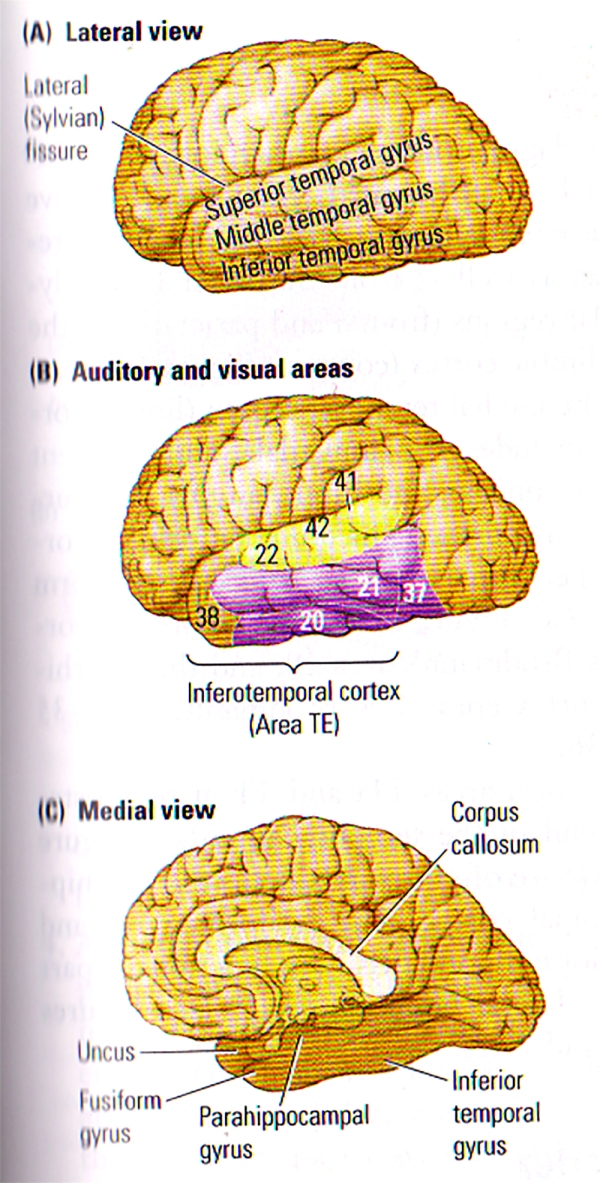

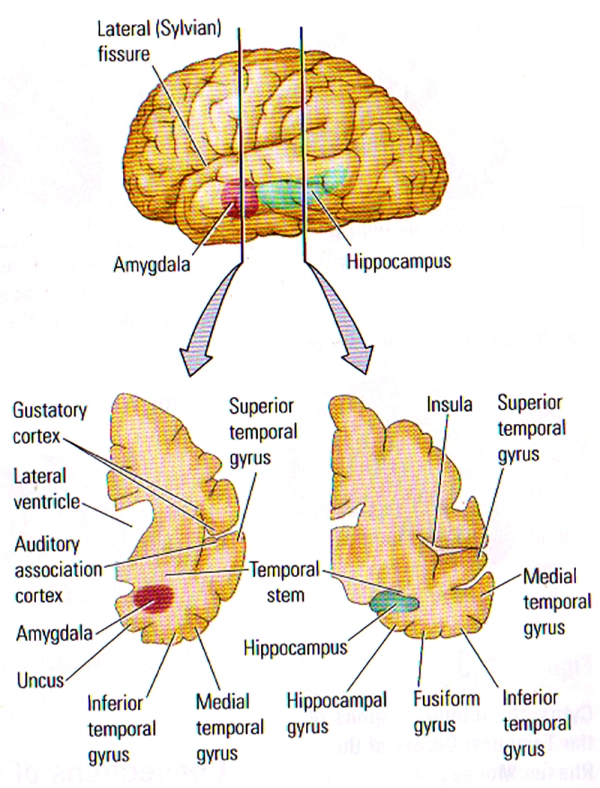

The temporal lobe consists of all the tissue located below the lateral (Sylvian) fissure and anterior to the occipital cortex (FIGURE A). Subcortical temporal-lobe structures include the limbic cortex, the amygdala, and the hippocampal formation (FIGURE B). Connections to and from the temporal lobe extend to all areas of the brain. Typical symptoms of temporal-lobe disorder or damage include marked disturbances of affect and personality, memory problems, and some form of language deficit.

FIGURE A. Anatomy of the Temporal Lobe | (A) The three major gyri visible on the lateral surface of the temporal lobe. (B) Brodmann’s cytoarchitectonic zones on the lateral surface. Auditory areas are shown in yellow and visual areas in purple. Areas 20, 21, 37 and 38 are often referred to by von Economo’s designation, TE. (C) The gyri visible on a medial view of the temporal lobe. The uncus refers to the anterior extension of the hippocampal formation. The parahippocampal gyrus includes areas TF and TH.

FIGURE B. Internal Structure of the Temporal Lobe | (TOP) Lateral View of the left hemisphere showing the positions of the amygdala and the hippocampus buried deeply in the temporal lobe. The vertical lines show the approximate location of the coronal sections in the bottom illustration. (BOTTOM) Frontal views through the left hemisphere illustrating the cortical and subcortical regions of the temporal lobe.

Subdivisions of the Temporal Cortex

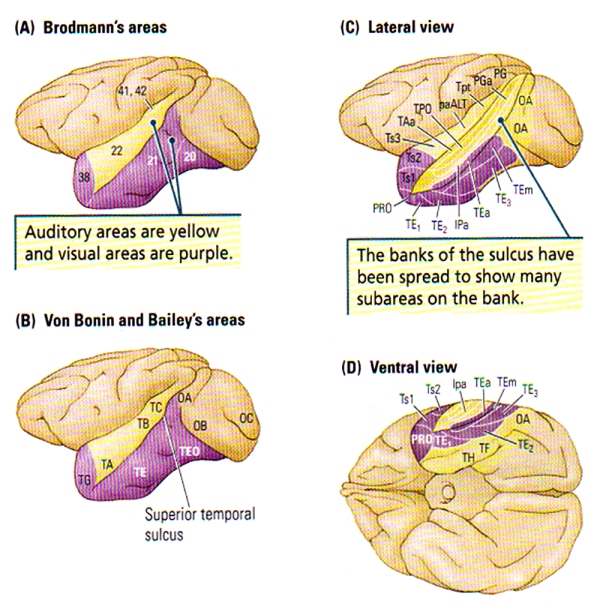

Brodmann identified 10 temporal areas, but many more have since been identified in monkeys, which suggests that humans may also have many more areas than Brodmann described. The temporal areas on the lateral surface can be divided into those that are auditory (FIGURE A(B), Brodmann areas 41, 42 and 22) and those that form the Ventral Visual Stream on the lateral temporal lobe (FIGURE A(B), areas 20, 21, 37 and 38). These visual regions are often referred to as the Inferotemporal Cortex or by von Economo’s designation, TE.

FIGURE C. Cytoarchitectonic Regions of the Temporal Cortex of the Rhesus Monkey | (A) Brodmann’s Areas. (B) Von Bonin and Bailey’s Areas. (C and D) Lateral and ventral views of Seltzer and Pandya’s parcellation showing the multimodal areas in the superior temporal sulcus. Subareas revealed in part C are generally NOT visible from the surface.

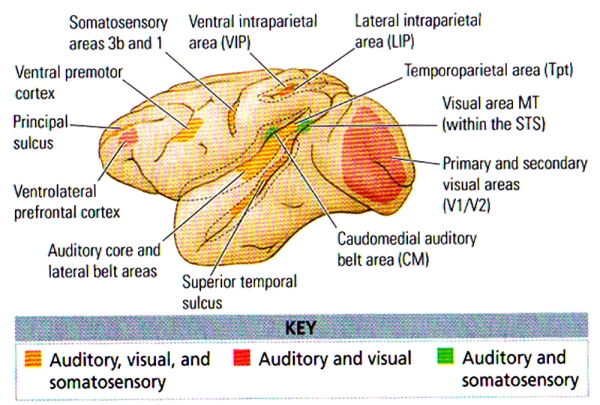

A large amount of cortex lies hidden within the sulci of the temporal lobe, as shown in the frontal views at the bottom of FIGURE B. This is particularly true of the lateral (Sylvian) fissure, which contains the tissue forming the insula, an area that includes the gustatory cortex and the auditory association cortex. The Superior Temporal Sulcus (STS), which divides the superior and middle temporal gyri, also contains a considerable amount of neocortex. FIGURE C shows the many subregions of the STS, a multimodal (polymodal) cortex that receives input from auditory, visual and somatic regions, from two other polymodal regions (frontal and parietal), and from the paralimbic cortex.

FIGURE D. Multisensory Areas in the Monkey Cortex | Coloured areas represent regions where anatomical or electrophysiological data, or both, demonstrate multisensory interactions. Dashed lines represent open sulci. (After Ghazanfar and Schroeder, 2006.)

The medial temporal region (limbic cortex) includes the amygdala and the adjacent cortex (uncus), the hippocampus and surrounding cortex (subiculum, entorhinal cortex, perirhinal cortex), and the fusiform gyrus (see FIGURE B). The entorhinal cortex is Brodmann’s area 28, and the perirhinal cortex comprises Brodmann’s areas 35 and 36.

Cortical areas TH and TF at the posterior end of the temporal lobe (see FIGURE C) are often referred to as the parahippocampal cortex. The fusiform gyrus and the inferior temporal gyrus are functional parts of the lateral temporal cortex (see FIGURE A and FIGURE B).

Connections of the Temporal Cortex

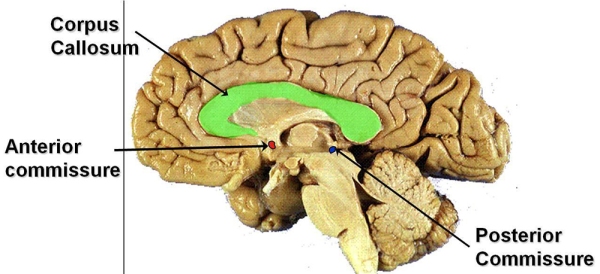

The temporal lobes are rich in internal connections, in afferent projections from the sensory systems, and in efferent projections to the parietal and frontal association regions, the limbic system and the basal ganglia. The corpus callosum connects the neocortex of the left and right temporal lobes, whereas the anterior commissure connects the temporal cortex and the amygdala.

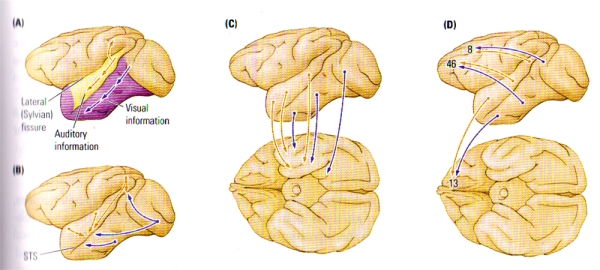

FIGURE E. Major Intracortical Connections of the Temporal Lobe | (A) Auditory and visual information progresses ventrally from the primary regions toward the temporal pole en route to the medial temporal regions. Auditory information also forms a dorsal pathway to the posterior parietal lobe. (B) Auditory, visual, and somatic outputs go to the multimodal regions of the superior temporal sulcus (STS). (C) Auditory and visual information goes to the medial temporal region, including the amygdala and the hippocampal formation. (D) Auditory and visual information goes to two prefrontal regions, one on the dorsolateral surface and the other in the orbital region (area 13).

Studies of temporocortical connections in the monkey have revealed five distinct types of corticocortical connection (see FIGURE E), each of which subserves a particular function:

- A Hierarchical Sensory Pathway. This pathway is essential for stimulus recognition. The hierarchy of connections originates in the primary and secondary auditory and visual areas and ends in the temporal pole (see FIGURE E(A)). The visual projections form the ventral stream of visual processing, whereas the auditory projections form a parallel ventral stream of auditory processing.

- A Dorsal Auditory Pathway. Projecting from the auditory areas to the posterior parietal cortex (FIGURE E(A)), this pathway is analogous to the dorsal visual pathway and is thus concerned with directing movements with respect to auditory information. It likely plays a role in detecting the spatial location of auditory inputs.

- A Polymodal Pathway. This pathway is a series of parallel projections from the visual and auditory association areas into the polymodal regions of the superior temporal sulcus (see FIGURE E(B)). The polymodal pathway seems to underlie the categorisation of stimuli.

- A Medial Temporal Projection. Vital for long-term memory, the projection from the auditory and visual association areas into the medial temporal, or limbic, regions goes first to the perirhinal cortex, then to the entorhinal cortex, and finally into the hippocampal formation or the amygdala or both (see FIGURE E(C)). The hippocampal projection forms the perforant pathway – disturbance of this projection leads to major dysfunction in hippocampal activity.

- A Frontal-Lobe Projection. This series of parallel projections, necessary for various aspects of movement control, short-term memory, and affect, reaches from the temporal association areas to the frontal lobe (see FIGURE E(D)).

Each of these five projection pathways plays a distinct and major role in temporal-lobe function.

A Theory of Temporal Lobe Functions

The temporal lobe is multi-functional and comprises the primary auditory cortex, the secondary auditory and visual cortex, the limbic cortex, and the amygdala and hippocampus. The hippocampus works in combination with the object-recognition and memory functions of the neocortex and has a fundamental role in organising memories of objects in space. The amygdala is also responsible for adding affective tone (emotions) to sensory input and memories.

Based on the cortical anatomy, three basic sensory functions of the temporal cortex can be identified:

- Processing auditory input

- Visual object recognition

- Long-term storage of sensory input

Temporal-lobe functions are best explained by considering how the brain analyses sensory stimuli as they enter the nervous system. Imagine a hiker walking in the woods who notices a wide variety of birds. Suppose that the hiker decides to keep a mental list of all the birds encountered, to report to his or her sister, an avid nature lover and birder. Now suppose that the hiker comes across a rattlesnake in the middle of the path: he or she would very likely change direction and look for birds in a safer location. Let us consider the temporal-lobe functions engaged in such activity.

Sensory Processes

We shall use this hiking example to explain the processes involved. To distinguish different types of birds, awareness of specific colours, shapes and sizes is vital; such object recognition is the function of the ventral visual pathway in the temporal lobe.

Speed also matters in such natural situations, because birds rarely stay still for long and may be seen from a different angle at each sighting (e.g. a side view versus a rear view). Developing categories of object types is vital to both perception and memory, and depends on the inferotemporal cortex. Categorisation may also require some form of directed attention, since some aspects of a stimulus are more important for classification than others [e.g. language, culture and speech in human beings].

For example, distinguishing two different types of yellow bird requires directing attention away from colour and towards shape, size and other distinguishing characteristics. Damage to the temporal cortex leads to deficits in identifying and categorising stimuli. Such a patient, however, would have no difficulty locating a stimulus or recognising that an object is physically present, since these abilities depend on other parts of the brain: the posterior parietal cortex and the primary sensory areas, respectively.

As the hiker continues to look for birds, he or she may also hear a birdsong, which must be matched with the visual input. This process of matching visual and auditory information is known as cross-modal matching and likely depends on the cortex of the superior temporal sulcus.

As the hike progresses and more birds are encountered, memories must be formed so that the details of each bird can be retrieved later. Furthermore, as the birds vary, their names must be retrieved from memory. These long-term memory processes depend on the entire ventral visual stream as well as on the paralimbic cortex of the medial temporal region.

Affective Responses

Image: A Grand Canyon Pink Rattlesnake

On encountering the snake, the hiker would first hear the rattle, a warning of danger, and stop. Next, he or she would scan the ground to locate and identify the venomous reptile while experiencing a rising heart rate and blood pressure. This affective response is a function of the amygdala. The association of sensory input (stimulus) with emotion is crucial for learning, because specific stimuli become associated with their positive, negative or neutral consequences, and behaviour is shaped or modified accordingly.

If this affective system were absent from a person’s brain, all stimuli would be treated as equivalent. Consider the consequences of failing to associate a venomous rattlesnake with the danger of being bitten, or of being unable to associate good and positive feelings (such as honesty, warmth, trust and love) with a specific person.

Laboratory animals with amygdala lesions generally become extremely placid and lack emotional reactions to threatening stimuli. For example, monkeys that were formerly terrified of snakes become indifferent to them (and to their potentially fatal consequences) and may even reach out and pick them up.

Spatial Navigation

When the hiker decides to change direction, the hippocampus becomes active. It contains cells that code places in space, allowing us to navigate and to remember our location.

Considering these general functions of the temporal lobes (sensory, affective and navigational), it is clear how devastating their loss would be for behaviour: the inability to perceive or remember events, including language, and a loss of affect. However, a person lacking temporal-lobe function would still be able to use the dorsal visual system to make visually guided movements and, in many circumstances, would appear completely normal to others.

The Superior Temporal Sulcus & Biological Motion

The hiking example omits one further temporal-lobe function that most animals engage in: the analysis of biological motion, that is, movements that have particular relevance to a given species. For example, among humans in Western Europe, many movements of the eyes, face, mouth, hands and body have social meanings. The superior temporal sulcus analyses such biological motion.

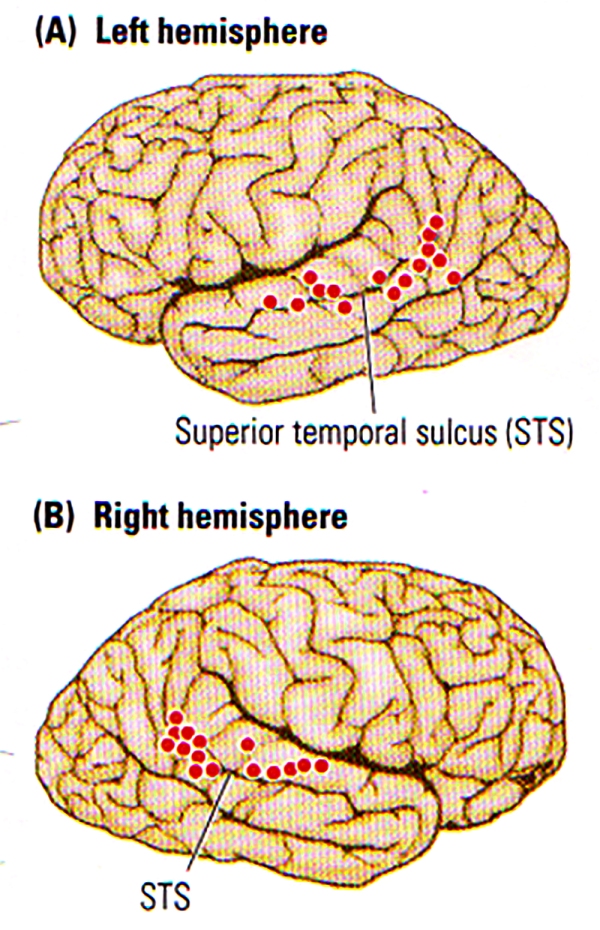

FIGURE F. Biological Motion | Summary of the activation (indicated by dots) of the Superior Temporal Sulcus (STS) region in the left (A) and right (B) hemispheres during the perception of biological motion. (After Allison, Puce, and McCarthy, 2000.)

The STS plays a role in categorising stimuli from the multimodal inputs it receives. One major category is social perception, which involves analysing and responding to actual or implied bodily movements that provide socially relevant information about another person’s state. Such information plays an important role in social cognition, or “Theory of Mind”, which allows us to develop hypotheses about another individual’s intentions. For example, the direction of a person’s gaze indicates what that person is (or is not) attending to.

In a review, Truett Allison and colleagues proposed that cells in the superior temporal sulcus play a key role in social cognition. For example, cells in the monkey STS respond to various forms of biological motion, including the direction of eye gaze, facial expression, and mouth, head and hand movements.

For advanced social animals such as primates, the ability to understand and respond to biological motion is critical for inferring the intentions of others. As shown in FIGURE F, imaging studies have revealed activation along the STS during the perception of various types of biological motion.

One major correlate of mouth movements is vocalisation, so regions of the STS might also be expected to take part in perceiving the sounds specific to a species. In monkeys, for example, cells in the superior temporal gyrus, which is adjacent to the STS and sends connections to it, show a preference for “monkey calls”. In humans too, imaging studies have revealed that the superior temporal gyrus is activated both by human vocalisations and by melodic sequences.

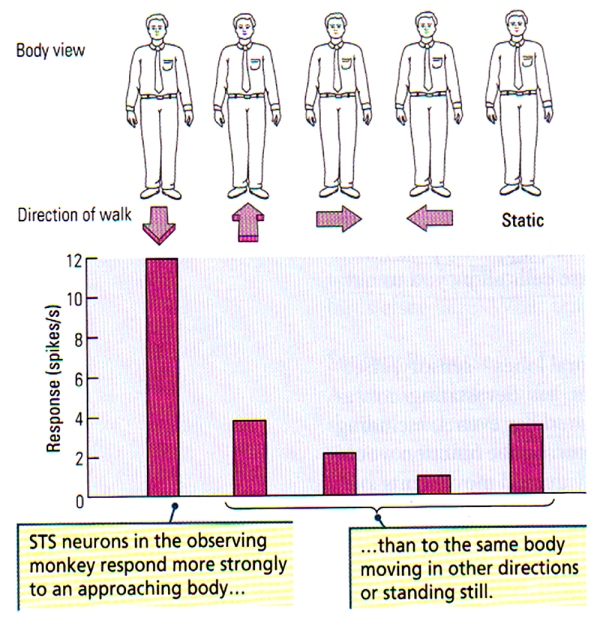

One could therefore predict that some part of the superior temporal sulcus is activated by the combination of a visual stimulus (mouth movements) with talking or singing; presumably, speech and singing are perceived as complex forms of biological motion. It follows that temporal-lobe injuries that impair the analysis of biological motion are likely to be associated with deficits in social awareness and judgement. Studies by David Perrett and his colleagues illustrate the nature of processing in the STS: they found that neurons in the superior temporal sulcus may respond to particular faces viewed head-on, faces viewed in profile, the posture of the head, or even specific facial expressions. Perrett also found that some STS cells are highly sensitive to primate bodies moving in a particular direction, another characteristic biological motion (see FIGURE G below). This finding is remarkable because the basic configuration of the primate stimulus remains identical as it moves in different directions; only the direction changes.

FIGURE G. Neuronal Sensitivity to Direction of Body Movements | (Top) Schematic representation of the front view of a body. (Bottom) The histogram illustrates a greater neuronal response of STS neurons to the front view of a body that approaches the observing monkey compared with the responses to the same view of the body when the body is moving away, to the right and to the left, or is stationary. (After Perrett et al., 1990.)

Visual Processing in the Temporal Lobe

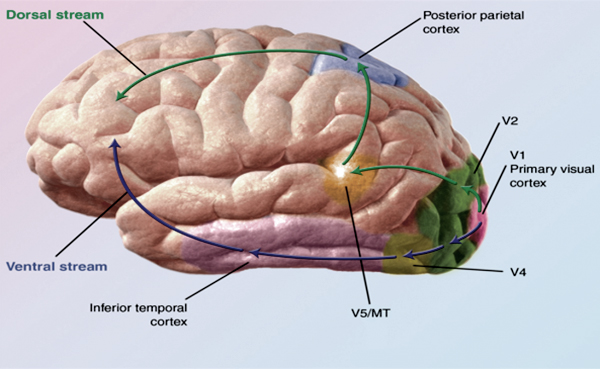

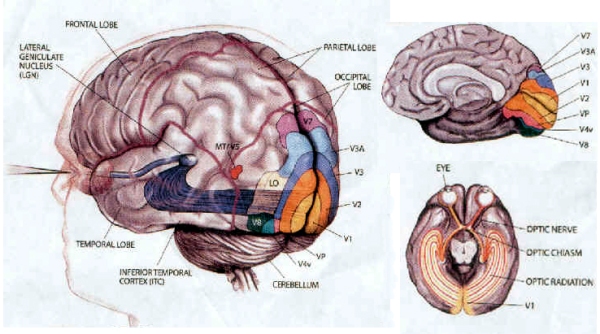

Most visual information from the eyes passes through the Lateral Geniculate Nucleus (LGN), part of the thalamus, which relays it to the primary visual cortex (V1) around the calcarine fissure in the occipital lobe [V1 is critical for sight; its loss leads to blindness]. Both the dorsal and the ventral streams originate in V1. It is believed that human beings possess two distinct visual systems.

When visual information leaves the occipital lobe (visual cortex), it follows two streams:

1) The Ventral Stream begins in V1 and passes through visual area V2, then V4, to the inferior temporal cortex. Known as the “What Pathway”, it is responsible for processes related to form recognition and object representation, and is also linked to the formation of long-term memory. The ventral stream is associated with the concept of “vision in the brain”, which allows humans to make sense of the visual information they receive. Vartanian & Skov (2014) found activity in the anterior insula (a region involved in experiencing emotion) and in the ventral stream when participants viewed paintings. A subject with damage to the ventral stream may still be able to see, perceive colours and movement, and understand that an object or face is meant to have a meaning, yet fail to perceive “what” the object or face is. This condition is known as agnosia, meaning “failure to know”: patients lose the ability to identify things by sight but have no difficulty with word memory or descriptive language.

Visual agnosia appears to result not from a primary visual problem but from a failure of the associative functions that give meaning to what is seen.

Lissauer (1890) defined two types of visual agnosia: apperceptive visual agnosia and associative visual agnosia.

In apperceptive visual agnosia, subjects cannot identify, draw or copy an object but can identify it by touch (Benson and Greenberg, 1969). In associative visual agnosia, subjects can “perceive” the object but cannot associate it with the correct word. Their knowledge of the object is intact, as shown by recognition through touch and by verbal description, but visual identification fails; they can still copy simple figures, although this may take a long time.

2) The Dorsal Stream begins in V1, passes through visual area V2, then the dorsomedial area and V5, and continues to the posterior parietal cortex. Known as the “Where” or “How” Pathway, it is believed to play a major part in processing motion, the location of objects in the visual field, and fine motor control of the arms and eyes. Damage to the dorsal stream disrupts visuospatial perception and visually guided behaviour, but not conscious visual perception.

In the well-known case of A.T., a woman who could not grasp unfamiliar objects she saw, the dorsal route had been interrupted by a lesion of the occipitoparietal region. She could recognise objects and indicate their size with her fingers, but her object-directed movements were inaccurate and she could not grip properly, grabbing awkwardly with poorly coordinated fingers.

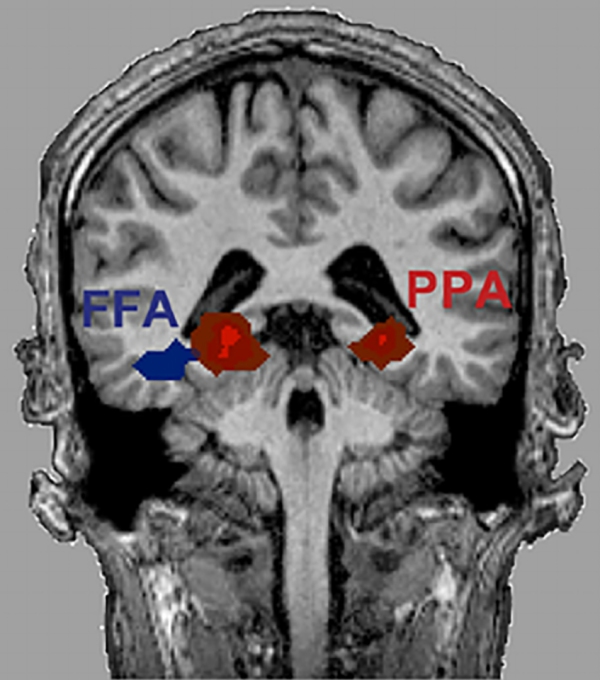

FFA [Fusiform Face Area] & PPA [Parahippocampal Place Area]

The selective activation of the FFA [Fusiform Face Area] and the PPA [Parahippocampal Place Area] by categories of visual stimulation that include a wide range of different exemplars raises an interesting question: how can such dissimilar objects be treated as equivalent by specialised cortical regions? Not only are different views of the same object linked together as being the same, but different objects also appear to be linked together as members of the same category. Such automatic categorisation of sensory information must be at least partly learned, because humans categorise artificial objects such as cars or furniture, for which the brain is unlikely to be innately designed.

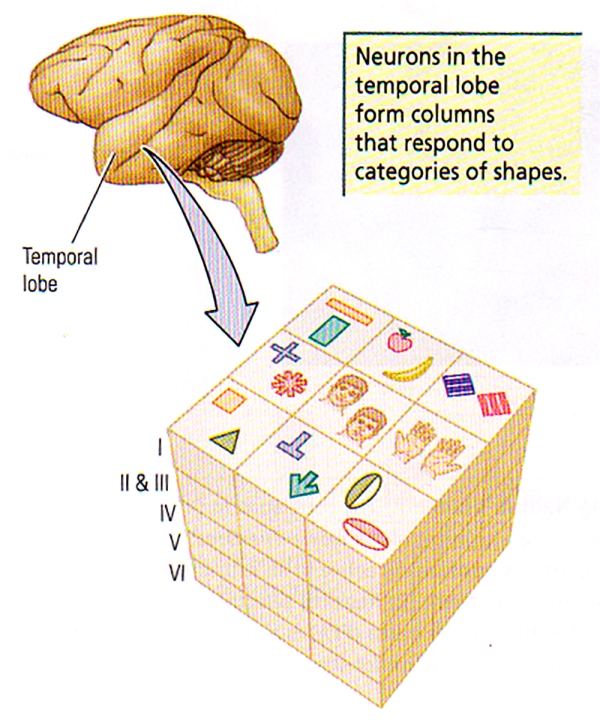

To understand how the brain learns such categories, researchers have looked for changes in neuronal activity as subjects learn them. Keiji Tanaka began by trying to determine the critical features that activate neurons in the monkey inferotemporal cortex. Tanaka and his colleagues presented a range of three-dimensional models of animals and plants to find the effective stimuli for specific cells, and then tried to determine the necessary and sufficient stimulus properties for these cells. They found that most cells in area TE (see FIGURE C) require moderately complex combinations of features, such as orientation, size, colour and texture, for activation.

FIGURE H. Columnar Organisation in Area TE | Cells with similar but slightly different selectivity cluster in elongated vertical columns, perpendicular to the cortical surface.

As shown in FIGURE H, Tanaka found that cells with similar, although slightly different, selectivity tend to cluster vertically in columns. Because the cells within a column are not identical in their stimulus selectivity, an object is likely represented not by the activity of a single cell but by the activity of many cells within a columnar module.

Tanaka and others have also described two remarkable features of inferotemporal neurons in monkeys. First, the stimulus specificity of these neurons is altered by experience. Monkeys were trained over a period of one year to discriminate 28 complex shapes, and the stimulus preferences of their inferotemporal neurons were then determined using a larger set of animal and plant models. In the trained monkeys, 39% of inferotemporal neurons gave a maximum response to some of the stimuli used in training, compared with only 9% of neurons in naïve monkeys.

“If we can train lesser primates to change their perception, going as far as to alter their neuronal physiology, then I have strong hope for human beings.” -Danny D’Purb

These results confirm that the temporal lobe’s role in visual processing is not fully determined genetically but is shaped by experience, even in adults. Such experience-dependent characteristics may allow the visual system to adapt to the different demands of a changing visual environment. This adaptability is important for human visual recognition, since the demands of forests differ greatly from those of open plains or urban environments. Furthermore, experience-dependent visual neurons ensure that we can identify visual stimuli that were never encountered during the evolution of the human brain.

The second interesting feature of inferotemporal neurons is that they may not only process visual input but also provide a mechanism for the internal representation of images of objects. Joaquin Fuster and John Jervey demonstrated that, when monkeys are shown specific objects that must be remembered, neurons in the monkey inferotemporal cortex continue to discharge during the “memory” period. Such selective discharges may provide the basis for visual imagery: the discharge of groups of neurons that are selective for the characteristics of particular objects may create a mental image of an object in its absence.

Could human faces be special?

“Mona Lisa” (La Joconde) by Leonardo da Vinci (1503–1519)

Humans probably spend more time analysing faces than any other single stimulus. Infants look at faces from birth, and adults are particularly skilled at identifying faces despite large variations in expression and viewing angle, even when faces are visually altered [with beards, spectacles or hats]. The face also has many muscles that convey a wealth of social information, and humans are unique among primates in spending a great deal of time looking directly at the faces of other members of their species. The importance of faces as visual stimuli has led to the proposal that special pathways exist specifically for analysing faces, and several lines of evidence support this view.

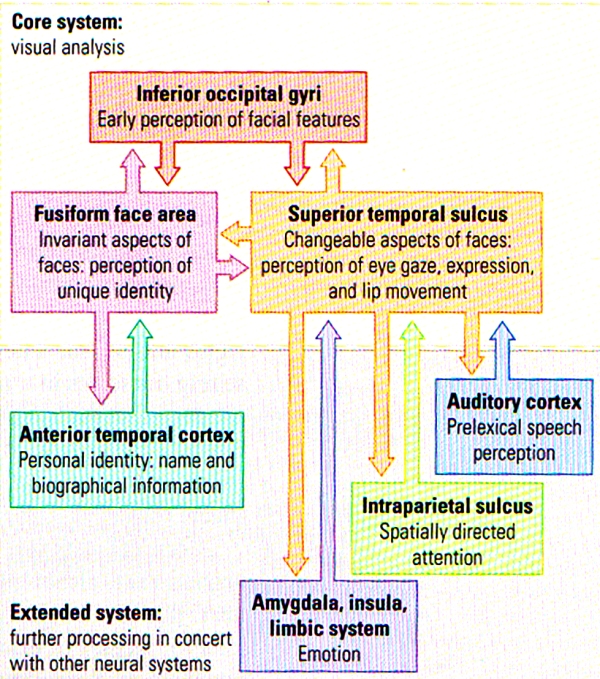

FIGURE I. A Model of Distributed Human Neural System for Face Perception | The model is divided into a core system (TOP), consisting of occipital and temporal regions, and an extended system (BOTTOM), including regions that are part of neural systems for other cognitive functions. (After Haxby, Hoffman, and Gobbini, 2000.)

The face-perception system is extensive and includes regions in the occipital lobe as well as several different regions of the temporal lobe. FIGURE I summarises a model by Haxby and his colleagues in which different aspects of face perception (such as facial expression versus identity) are analysed in core visual areas in the temporal part of the visual stream. The model also includes other cortical regions as an “extended system” that analyses further facial characteristics, such as emotion and lip reading. The key point is that the analysis of human faces is unlike that of any other stimulus: faces may indeed be special objects to the brain. There is also a clear asymmetry in the role of the temporal lobes in facial analysis: right temporal lesions have a greater effect on facial processing than do similar left temporal lesions. Researchers have noted this asymmetry in face perception even in normal subjects.

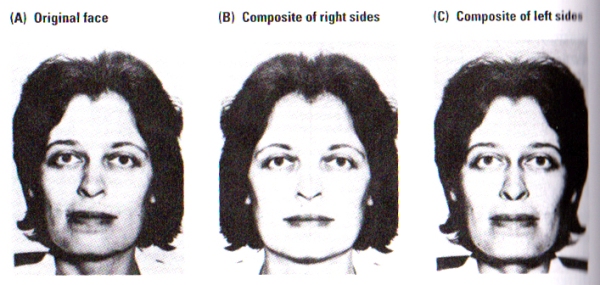

FIGURE J. The Split-Faces Test | Subjects were asked which of the two pictures, B or C, most closely resembles picture A. Control subjects chose picture C significantly more often than picture B. Picture C corresponds to that part of picture A falling in a subject’s left visual field. The woman pictured chose B, closer to the view that she is accustomed to seeing in the mirror. (After Kolb, Milner, and Taylor, 1983.)

Photographs of faces such as those in FIGURE J were presented to subjects. Photographs B and C are composites of the right and left sides, respectively, of the original face shown in photograph A. When asked which composite looked most like the original face, normal subjects consistently chose photograph C, the composite of the left side of photograph A, and they did so whether the photographs were presented upright or inverted. In contrast, patients with either right temporal or right parietal removals failed to match either side of the face consistently, in either the upright or the inverted condition.
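The construction of the split-face composites can be sketched as a simple image operation. This is a minimal illustration, not Kolb, Milner and Taylor's actual procedure: it assumes a greyscale photograph stored as a list of pixel rows, a face centred so that its vertical midline falls on the middle column, and “left” meaning the left half of the image as the viewer sees it. The function name `split_face_composite` is hypothetical.

```python
from typing import List

Image = List[List[int]]  # rows of greyscale pixel values (assumed format)


def split_face_composite(image: Image, side: str) -> Image:
    """Build a symmetric composite from one half of a face image.

    side="left" keeps the left half of the image (as the viewer sees it)
    and appends its mirror image; side="right" keeps the right half and
    prepends its mirror image. Assumes the facial midline lies at the
    centre column; for odd widths that column is kept once as the midline.
    """
    if side not in ("left", "right"):
        raise ValueError("side must be 'left' or 'right'")
    composite: Image = []
    for row in image:
        width = len(row)
        half = width // 2
        middle = row[half:half + 1] if width % 2 else []  # midline column, if any
        if side == "left":
            kept = row[:half]
            composite.append(kept + middle + kept[::-1])
        else:
            kept = row[width - half:]
            composite.append(kept[::-1] + middle + kept)
    return composite
```

For example, `split_face_composite([[1, 2, 3, 4]], "left")` returns `[[1, 2, 2, 1]]`. Under these assumptions, the “left” composite plays the role of photograph C and the “right” composite that of photograph B; with real photographs, the face would first have to be aligned so that its midline actually sits at the image centre.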

The results of the split-faces test not only provide evidence for asymmetry in facial processing but also raise the question of how we perceive our own faces. Self-perception seems to be a unique case of visual perception, since we usually see our own face in a mirror, whereas other people see it directly; FIGURE J illustrates the implications of this difference.

Photograph A shows the woman as most people see her. Because humans have a left-visual-field bias in perception, most right-handers choose photograph C as the picture most resembling the original A. However, when the woman in the photograph was asked to choose the picture most resembling her, she chose photograph B, which matches her familiar view of herself in the mirror, the reverse of the view that other people have.

This bias has an intriguing consequence for people’s opinions of their own photographs. Many people complain that they are not photogenic, that their photographs are never taken from the right angle, or that something else is wrong with the image. The real problem may be rather different: people are accustomed to seeing themselves in the mirror, so when they look at a photograph, their perceptual bias falls on the side of the face that they do not normally attend to in the mirror. In effect, they glimpse themselves through the eyes of the rest of the world. People do not see themselves as others see them, and the more asymmetrical a face is, the less flattering its owner is likely to find photographs of it.

One critical question about face processing and the FFA remains, however. Some researchers have argued that although face recognition appears to tap a specialised face area, the same region could be used for other forms of visual expertise and is not specific to faces. For example, imaging studies have revealed overlapping patterns of FFA activation for faces in control participants, for cars in car experts and for birds in bird experts. The prevailing view is that the FFA is innately biased to categorise complex objects such as faces, but that it is fairly plastic as a consequence of perceptual experience and training and can be recruited for other forms of visual categorisation expertise.

Study: Does your face tell people how healthy you are? / Henderson, A., Holzleitner, I., Talamas, S. and Perrett, D. (2016). Perception of health from facial cues. Philosophical Transactions of the Royal Society B: Biological Sciences, 371(1693), p.20150380.

Auditory Processing in the Temporal Lobe

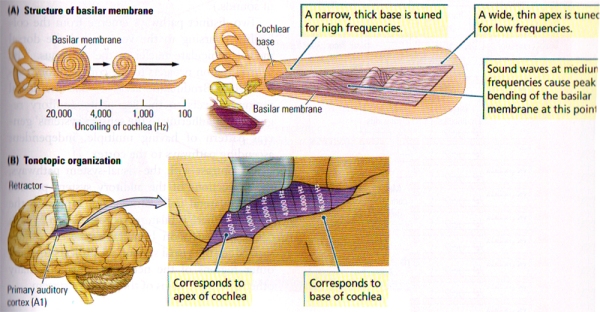

Whenever a sound reaches the ear, it triggers a cascade of mechanical and neural events in the cochlea, the brainstem and, eventually, the auditory cortex that results in the percept of sound. Like the visual cortex, the auditory cortex has multiple regions, each with its own tonotopic map.

Although the precise functions of these maps are not yet fully understood, their ultimate goal is the perception of sound objects, the localisation of sound, and decisions about movements in relation to sounds. Many cells in the auditory cortex respond only to specific frequencies, which are perceived as pitches, or to multiples of those frequencies. Two of the most important types of sound for humans are music and language.

Speech Perception

Human speech differs from all other auditory input in three fundamental ways.

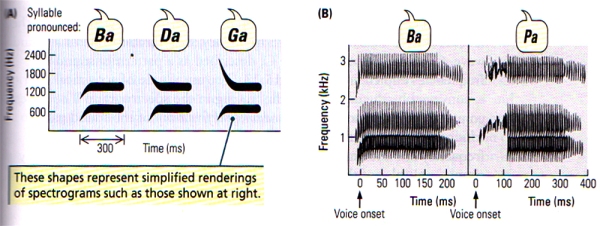

- Speech sounds come mainly from three restricted ranges of frequencies, known as formants. FIGURE K(A) shows sound spectrograms of different two-formant syllables. The dark bars indicate the frequency bands, seen in more detail in FIGURE K(B), which shows that the syllables differ both in the onset frequency of the second (higher) formant and in the onset time of the consonant. Notice that vowel sounds occupy a constant frequency band, whereas consonants show rapid changes in frequency.

- The same speech sound varies from one context to another, yet every version is perceived as the same sound. For example, the sound spectrogram of the letter “d” in English is different in the words “deep”, “deck” and “duke”, yet a listener perceives all of them as “d”. The auditory system must therefore have a mechanism for categorising varying sounds as equivalent, and this mechanism must be shaped by experience, because a major obstacle to learning a new language in adulthood is the difficulty of learning its equivalent sound categories. A word’s spectrogram thus depends on its context: the words that precede and follow it (there may be a parallel mechanism for musical categorisation).

- Speech sounds also change very rapidly in relation to one another, and the sequential order of the sounds is critical to understanding. According to Alvin Liberman, humans can perceive speech at rates of as many as 30 segments per second. Speech perception at these higher rates is truly astonishing, because it far exceeds the auditory system’s ability to transmit all the speech as separate pieces of auditory information. For example, non-speech noise is perceived as a buzz at a rate of only about 5 segments per second. It seems clear that the brain recognises and analyses language sounds in a very special way, much as the bat brain is specialised for echolocation. This special mechanism for speech perception is most probably located in the left temporal lobe. The function may not be unique to humans: studies in both monkeys and rats show specific deficits in the perception of species-typical vocalisations after left temporal lesions.

FIGURE K. Speech Sounds | (A) Schematic spectrograms of three different syllables, each made up of two formants. (B) Spectrograms of syllables differing in voice onset time. (After Springer, 1979.)

Music Perception

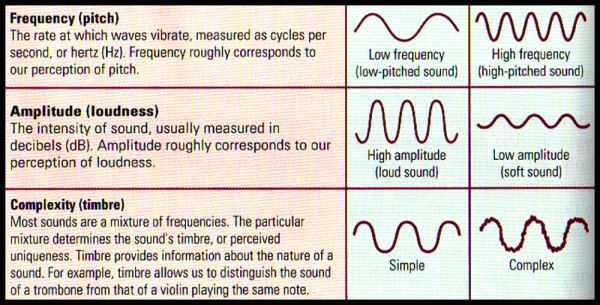

Music differs from language in that it relies on the relations between auditory elements rather than on individual elements. A tune, for example, is defined not by the pitches of the tones that constitute it but by the arrangement of their durations and the intervals between them. Musical sounds may differ from one another in three major aspects: pitch (frequency), loudness (amplitude) and timbre (complexity).

FIGURE L. Breaking Down Sound | Sound waves have 3 physical dimensions (frequency, amplitude and complexity) that correspond to the perceptual dimensions of pitch, loudness and timbre.

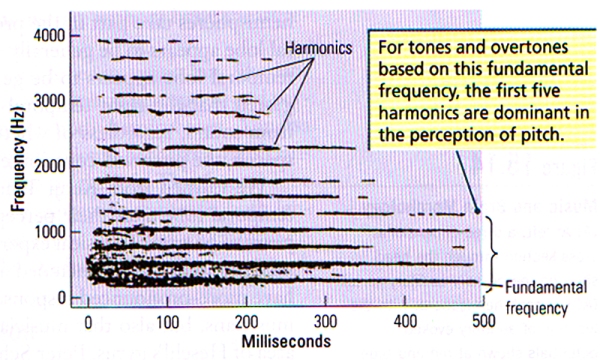

- Pitch (Frequency) refers to the position of a sound on the musical scale as perceived by the listener. Pitch is clearly related to frequency: the vibration rate of a sound wave. Take, for example, middle C, whose pattern of sound frequencies is depicted in FIGURE M. The amplitude of the acoustical energy is conveyed by the darkness of the tracing in the spectrogram. The lowest component of this note is the fundamental frequency of the sound pattern, which is 264 Hz, or middle C. Frequencies above the fundamental frequency are known as overtones or partials. The overtones are generally simple multiples of the fundamental (for example, 2 x 264, or 528 Hz; 4 x 264, or 1056 Hz), as shown in FIGURE M. Overtones that are multiples of the fundamental frequency are known as harmonics.

- Loudness (Amplitude) refers to the magnitude of a sensation as judged by a given person. Loudness, although related to the intensity of a sound as measured in decibels, is in fact a subjective evaluation described by simple terms such as “very loud”, “soft”, “very soft” and so forth.

- Timbre (Complexity) refers to the individual and distinctive character of a sound, the quality that distinguishes it from all other sounds of similar pitch and loudness. For example, we can distinguish the sound of a guitar from that of a violin even though they may play the same note at a similar loudness.

FIGURE M. Spectrographic Display of the Steady-State Part of Middle C (264 Hz) Played on Piano | Bands of acoustical energy are present at the fundamental frequency, as well as at integer multiples of the fundamental (harmonics). (After Ritsma, 1967)

If the fundamental frequency is removed from a note by means of electronic filters, the overtones are still sufficient to determine the pitch of the fundamental frequency, a phenomenon known as periodicity pitch.
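Periodicity pitch is easier to picture from the waveform itself: a tone built only from the 528, 792, 1056 and 1320 Hz overtones of middle C still repeats every 1/264 s. The Python sketch below is a minimal illustration, not a model of what the auditory cortex actually computes. It synthesises such a tone and estimates its pitch with autocorrelation (correlating the signal with delayed copies of itself and taking the first strong peak), a standard signal-processing technique swapped in here for illustration. The function names, the 26,400 Hz sample rate, the 80 to 1000 Hz search range and the 0.9 peak threshold are all assumptions chosen for the example.

```python
import math


def harmonic_complex(f0, harmonics, sample_rate, n_samples):
    """Sum of equal-amplitude sine partials at integer multiples of f0.

    Leaving 1 out of `harmonics` mimics filtering out the fundamental.
    """
    return [
        sum(math.sin(2 * math.pi * k * f0 * n / sample_rate) for k in harmonics)
        for n in range(n_samples)
    ]


def estimate_pitch(signal, sample_rate, f_min=80.0, f_max=1000.0, threshold=0.9):
    """Return sample_rate / lag for the first strong autocorrelation peak, or None."""
    min_lag = max(1, int(sample_rate / f_max))
    max_lag = int(sample_rate / f_min)
    # Use the same number of products at every lag so the values are comparable.
    window = len(signal) - (max_lag + 1)
    if window <= 0:
        raise ValueError("signal is too short for the requested f_min")

    acf = [
        sum(signal[n] * signal[n + lag] for n in range(window))
        for lag in range(max_lag + 2)
    ]
    for lag in range(min_lag, max_lag + 1):
        is_local_peak = acf[lag - 1] <= acf[lag] >= acf[lag + 1]
        if is_local_peak and acf[lag] >= threshold * acf[0]:
            return sample_rate / lag
    return None


sample_rate = 26_400  # chosen so one period of 264 Hz is exactly 100 samples
no_fundamental = harmonic_complex(264, [2, 3, 4, 5], sample_rate, 2_640)
print(estimate_pitch(no_fundamental, sample_rate))  # 264.0
```

Although no 264 Hz component is present in `no_fundamental`, the summed overtones only line up again after 100 samples (1/264 s), so the strongest early autocorrelation peak falls at that lag.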

The ability to determine pitch from the overtones alone is likely due to the fact that the difference between the frequencies of adjacent harmonics is equal to the fundamental frequency (for example, 792 Hz - 528 Hz = 264 Hz, the fundamental frequency). The auditory system can determine this difference, and hence one perceives the fundamental frequency.
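The arithmetic in the paragraph above can be checked in a few lines of Python. This is an illustration under stated assumptions rather than a model of auditory processing: it treats the partials as exact integer frequencies, and the helper name `fundamental_from_partials` is hypothetical. It lists the harmonics of the 264 Hz middle C, removes the fundamental, and recovers 264 Hz both from the spacing between adjacent harmonics and from their greatest common divisor.

```python
from functools import reduce
from math import gcd


def fundamental_from_partials(partials_hz):
    """Largest frequency of which every partial is a whole-number multiple.

    Assumes idealised, exactly harmonic partials given as integers in Hz.
    """
    return reduce(gcd, partials_hz)


middle_c = 264  # Hz, the value used in FIGURE M
harmonics = [k * middle_c for k in range(1, 6)]  # [264, 528, 792, 1056, 1320]
filtered = harmonics[1:]  # fundamental removed, as by an electronic filter

# Spacing between adjacent harmonics (the difference described in the text)
print([hi - lo for lo, hi in zip(filtered, filtered[1:])])  # [264, 264, 264]
print(fundamental_from_partials(filtered))  # 264
```

Taking the greatest common divisor rather than a single difference also copes with a gap in the series: for 528, 1056 and 1320 Hz the adjacent differences are 528 and 264 Hz, but the GCD is still 264 Hz. Real spectra are noisy and not exactly integer-valued, so this is only an arithmetic analogy.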

An important aspect of pitch perception is that, although we can generate (and perceive) the fundamental frequency, we also perceive the complex tones of the harmonics, which is known as spectral pitch. When subjects listen to complex sounds and are asked to judge the direction of a shift in pitch, some base their judgement on the fundamental frequency and others on the spectral pitch. This individual difference is not related to musical training but rather to a basic difference in temporal-lobe organisation. The primary auditory cortex of the right temporal lobe appears to make the periodicity-pitch discrimination.

1885 – 1886 – The Beginner (Margaret Perry) by Elisabeth (Lilla) Cabot Perry

Robert Zatorre (2001) found that patients with right temporal lobectomies that include the removal of primary auditory cortex (area 41, or Heschl’s gyrus) are impaired at making pitch discriminations when the fundamental frequency is absent but are normal at making such discriminations when it is present. Their ability to identify the direction of a pitch change is, however, impaired.

Timing is a critical component of good music, and two types of time relations are fundamental to the rhythm of musical sequences:

(i) The segmentation of sequences of pitches into groups based on the duration of the sounds

(ii) The identification of temporal regularity, or beat, known technically as meter.

These two components can be dissociated by asking subjects either to tap out a rhythm or to keep time with the beat (as in spontaneously tapping a foot to a strong beat).

After analysing studies of patients with temporal-lobe injuries as well as neuroimaging studies, Robert Zatorre and Isabelle Peretz concluded that the left temporal lobe plays a major role in temporal grouping for rhythm, while the right temporal lobe plays a complementary role in meter (beat). The researchers also observed that rhythm has a motor component, which is broadly distributed to include the supplementary motor cortex, premotor cortex, cerebellum and basal ganglia.

It seems clear that music is much more than the perception of pitch, rhythm, timbre and loudness. Zatorre and Peretz reviewed many other features of music and the brain, including music memory, emotion, performance (both singing and playing), music reading and the effects of musical training. Memory is inescapably important to music because music unfolds over time: to perceive a tune, the listener must retain the notes that have already been heard.

The retention of melodies is much more affected by injury to the right temporal lobe, although injury to either temporal lobe impairs the learning of melodies. While both hemispheres contribute to the production of music, the right temporal lobe appears to play a greater role in the production of melody, and the left temporal lobe appears to be mostly responsible for rhythm. Zatorre (2001) proposed that the right temporal lobe has a special function in extracting pitch from sound, regardless of whether the sound is speech or music. In speech, pitch (frequency) contributes to the “tone” of the voice, known as prosody.

Image: Solfège en France [Musical training, formerly called solfège, is a French specialty] / FranceMusique

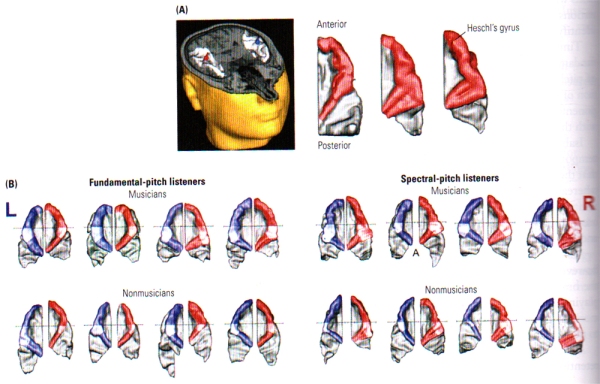

FIGURE N. Music and Brain Morphology | (A) At left, a three-dimensional cross section through the head showing the primary auditory cortex (AC) in each hemisphere, with the location of auditory evoked potentials shown as red and blue markers. At right, reconstructed dorsal views of the right auditory cortical surface showing the difference in morphology among three people. Heschl’s gyrus is shown in red. (B) Examples from individual brains of musicians (top row) and non-musicians (bottom row) showing the difference in morphology between people who hear the fundamental frequency and those who hear spectral pitch. Heschl’s gyrus is bigger on the left in the former group and bigger on the right in the latter group. Note: Heschl’s gyrus is bigger overall in the musicians. (From: Schneider, Sluming, Roberts, Scherg, Goebel, Specht, Dosch, Bleeck, Stippich and Rupp, 2005).

These gray-matter differences are positively correlated with musical proficiency, i.e. the greater the gray-matter volume, the greater the musical ability. Fundamental-pitch listeners also exhibit a pronounced leftward asymmetry of gray-matter volume in Heschl’s gyrus, whereas spectral-pitch listeners have a rightward asymmetry, independent of musical training (see FIGURE N (B)). Schneider’s results imply that innate differences in brain morphology are related to the way in which pitch is processed, and that some of these innate differences are related to musical ability. Practice and experience with music seem likely to be related to anatomical differences in the temporal cortex as well; however, the relation may be difficult to demonstrate without brain measurements before and after intense musical training.

Image: Une Saison à l’Opéra (A Season at the Opera)

Although the temporal lobes play a vital role in music, music perception and performance also involve the inferior frontal cortex in both hemispheres, much as language also extends into the frontal lobe. Sluming et al. (2002) demonstrated that professional orchestral musicians have significantly more gray matter in Broca’s area on the left. Such frontal-lobe effects may be related to similarities in the expressive output of language and music. The main point, however, is that music likely has widespread effects on the brain’s morphology and function that science has only started to unravel.

This bone flute found in Hohle Fels cave is believed to be around 43,000 years old and is taken as evidence that, like modern humans, Neanderthals likely had complementary hemispheric specialisation for music and language, which suggests that these abilities have biological and evolutionary roots. While this assumption seems obvious for language, it is less obvious for music, which has often been perceived as an artifact of culture. However, considerable evidence suggests that humans are born with a predisposition for music processing. Young infants display learning preferences for musical scales and are biased towards perceiving the regularities (such as harmonics) on which music is built. One of the strongest pieces of evidence for a biological basis of music is that a surprising number of humans are tone deaf, a condition known as congenital amusia. People with amusia are believed to have an abnormality in their neural networks for music, and no amount of musical training cures it. [Credit: Jensen / University of Tübingen]

Asymmetry of Temporal-Lobe Function

The temporal lobes are especially prone to epileptiform abnormalities, and surgical removal of the abnormal temporal lobe tends to benefit patients suffering from epilepsy. These surgical cases have also allowed neuropsychologists to study the complementary specialisation of the right and left temporal lobes.

Brenda Milner and her colleagues compared the effects of right and left temporal lobectomy and found that specific memory deficits vary depending on the side of the lesion: deficits in non-verbal memory (e.g. for faces) are associated with damage to the right temporal lobe, and deficits in verbal memory with damage to the left temporal lobe.

Similarly, right temporal lesions are associated with deficits in processing certain aspects of music, while left temporal lesions are associated with deficits in processing speech sounds. However, much remains to be learnt about the relative roles of the left and right temporal lobes in social and affective behaviour. Right, but not left, temporal-lobe lesions lead to impairments in the recognition of faces and facial expressions, so the two sides clearly play different roles in social cognition. Clinical experience also suggests that left and right temporal-lobe lesions have different effects on personality. Liegeois-Chauvel and colleagues studied musical processing in large groups of patients with temporal lobectomies and confirmed that injury to the right superior temporal gyrus impairs various aspects of the processing necessary for discriminating melodies. They also found a dissociation between the roles of the anterior and posterior regions of the superior temporal gyrus in different aspects of music processing, which suggests that these functions are localised within the gyrus.

It would be incorrect, however, to assume that the removal of both temporal lobes merely doubles the symptoms seen after unilateral temporal lobectomy. Bilateral temporal-lobe removal produces dramatic effects on both memory and affect that are orders of magnitude greater than those observed after unilateral lesions.

*****

References

- Fuster, J. M. & Jervey, J. P. (1982). Neuronal firing in the inferotemporal cortex of the monkey in a visual memory task. Journal of Neuroscience, 2, 361-375.

- Kolb, B. & Whishaw, I. (2009). Fundamentals of Human Neuropsychology. New York: Worth Publishers.

- Liegeois-Chauvel, C., Peretz, I., Babai, M., Laguitton, V. & Chauvel, P. (1998). Contribution of different cortical areas in the temporal lobes to music processing. Brain, 121, 1853-1867.

- Perrett, D. I., Harries, M. H., Benson, P. J., Chitty, A. J. & Mistlin, A. J. (1990). Retrieval of structure from rigid and biological motion: An analysis of the visual responses of neurones in the macaque temporal cortex. In A. Blake & T. Troscianko (Eds.), AI and the Eye. New York: Wiley.

- Tanaka, J. W. (2004). Object categorisation, expertise and neural plasticity. In M. S. Gazzaniga (Ed.), The Cognitive Neurosciences III (3rd ed.). Cambridge, MA: MIT Press.

- Tanaka, K. (1993). Neuronal mechanisms of object recognition. Science, 262, 685-688.

Danny J. D’Purb | DPURB.com

____________________________________________________


Developmental Psychology: The 3 Major Theories of Childhood Development

In 1984, Nicholas Humphrey described us as “nature’s psychologists”, or homo psychologicus. What he meant was that, as intelligent social beings, we tend to use our knowledge of our own thoughts and feelings (“introspection”) as a guide for understanding how others are likely to think, feel and hence behave. He also argued that we are conscious [i.e. we have self-awareness] precisely because such an attribute is useful in the process of understanding others and having a successful social existence: consciousness is a biological adaptation that enables us to perform introspective psychology. Today, we are confident that the ability to understand others’ thoughts, feelings and behaviour develops through childhood and most likely throughout our lives; and according to the greatest child psychologist of all time, Jean Piaget, a crucial phase of this process occurs in middle childhood.

Developmental psychology can be characterised as the field that attempts to understand and explain the changes that happen over time in the thought, behaviour, reasoning and functioning of a person due to biological, individual and environmental influences. Developmental psychologists study children’s development, and the development of human behaviour across the organism’s lifetime from a variety of different perspectives. Hence, if we are studying different areas of development, different theoretical perspectives will be fundamental and may influence the ways psychologists and scholars think about and study development.

Through the systematic collection of knowledge and experiments, we can develop a greater understanding and awareness of ourselves than would otherwise be possible…

Full Article: https://dpurb.com/2018/07/15/developmental-psychology-the-3-major-theories-of-development/

__________________________________________

Mapping Aesthetic Musical Emotions in the Brain

Abstract:

Music evokes complex emotions beyond pleasant/unpleasant or happy/sad dichotomies usually investigated in neuroscience. Here, we used functional neuroimaging with parametric analyses based on the intensity of felt emotions to explore a wider spectrum of affective responses reported during music listening. Positive emotions correlated with activation of left striatum and insula when high-arousing (Wonder, Joy) but right striatum and orbitofrontal cortex when low-arousing (Nostalgia, Tenderness). Irrespective of their positive/negative valence, high-arousal emotions (Tension, Power, and Joy) also correlated with activations in sensory and motor areas, whereas low-arousal categories (Peacefulness, Nostalgia, and Sadness) selectively engaged ventromedial prefrontal cortex and hippocampus. The right parahippocampal cortex activated in all but positive high-arousal conditions. Results also suggested some blends between activation patterns associated with different classes of emotions, particularly for feelings of Wonder or Transcendence. These data reveal a differentiated recruitment across emotions of networks involved in reward, memory, self-reflective, and sensorimotor processes, which may account for the unique richness of musical emotions.

Trost, W., Ethofer, T., Zentner, M. and Vuilleumier, P. (2011). Mapping Aesthetic Musical Emotions in the Brain. Cerebral Cortex, 22(12), pp.2769-2783.

__________________________________________

Why not everyone has absolute pitch

Extract:

In music, absolute pitch is the ability to name notes as soon as one hears them. Unlike relative pitch, the ability to recognise a note from a reference note, absolute pitch is very rare: in the West, it is thought to occur in about one person in ten thousand. Why do some people have absolute pitch while others do not? What happens in the brains of people who have it? How is this ability acquired? Can it be learned in adulthood? Some answers about what remains a scientific mystery, on the occasion of the Fête de la musique 2018.

Article: https://www.lemonde.fr/sciences/video/2018/06/21/pourquoi-tout-le-monde-n-a-pas-l-oreille-absolue_5318711_1650684.html

__________________________________________

Gray- and White-Matter Anatomy of Absolute Pitch Possessors

Dohn, A., Garza-Villarreal, E., Chakravarty, M., Hansen, M., Lerch, J. and Vuust, P. (2013). Gray- and White-Matter Anatomy of Absolute Pitch Possessors. Cerebral Cortex, 25(5), pp.1379-1388.

Abstract:

Absolute pitch (AP), the ability to identify a musical pitch without a reference, has been examined behaviorally in numerous studies for more than a century, yet only a few studies have examined the neuroanatomical correlates of AP. Here, we used MRI and diffusion tensor imaging to investigate structural differences in brains of musicians with and without AP, by means of whole-brain vertex-wise cortical thickness (CT) analysis and tract-based spatial statistics (TBSS) analysis. APs displayed increased CT in a number of areas including the bilateral superior temporal gyrus (STG), the left inferior frontal gyrus, and the right supramarginal gyrus. Furthermore, we found higher fractional anisotropy in APs within the path of the inferior fronto-occipital fasciculus, the uncinate fasciculus, and the inferior longitudinal fasciculus. The findings in gray matter support previous studies indicating an increased left lateralized posterior STG in APs, yet they differ from previous findings of thinner cortex for a number of areas in APs. Finally, we found a relation between the white-matter results and the CT in the right parahippocampal gyrus. In this study, we present novel findings in AP research that may have implications for the understanding of the neuroanatomical underpinnings of AP ability.

http://cercor.oxfordjournals.org/content/25/5/1379.full?sid=c5a29f5a-5aae-4170-9670-c4c74ebbab42

__________________________________________

Musical Expertise Boosts Implicit Learning of Both Musical and Linguistic Structures

Abstract:

Musical training is known to modify auditory perception and related cortical organization. Here, we show that these modifications may extend to higher cognitive functions and generalize to processing of speech. Previous studies have shown that adults and newborns can segment a continuous stream of linguistic and nonlinguistic stimuli based only on probabilities of occurrence between adjacent syllables or tones. In the present experiment, we used an artificial (sung) language learning design coupled with an electrophysiological approach. While behavioral results were not clear cut in showing an effect of expertise, Event-Related Potentials data showed that musicians learned better than did nonmusicians both musical and linguistic structures of the sung language. We discuss these findings in terms of practice-related changes in auditory processing, stream segmentation, and memory processes.

Francois, C. and Schon, D. (2011). Musical Expertise Boosts Implicit Learning of Both Musical and Linguistic Structures. Cerebral Cortex, 21(10), pp.2357-2365.

__________________________________________

Words in Context: The Effects of Length, Frequency, and Predictability on Brain Responses During Natural Reading

Abstract:

Word length, frequency, and predictability count among the most influential variables during reading. Their effects are well-documented in eye movement studies, but pertinent evidence from neuroimaging primarily stem from single-word presentations. We investigated the effects of these variables during reading of whole sentences with simultaneous eye-tracking and functional magnetic resonance imaging (fixation-related fMRI). Increasing word length was associated with increasing activation in occipital areas linked to visual analysis. Additionally, length elicited a U-shaped modulation (i.e., least activation for medium-length words) within a brain stem region presumably linked to eye movement control. These effects, however, were diminished when accounting for multiple fixation cases. Increasing frequency was associated with decreasing activation within left inferior frontal, superior parietal, and occipito-temporal regions. The function of the latter region—hosting the putative visual word form area—was originally considered as limited to sublexical processing. An exploratory analysis revealed that increasing predictability was associated with decreasing activation within middle temporal and inferior frontal regions previously implicated in memory access and unification. The findings are discussed with regard to their correspondence with findings from single-word presentations and with regard to neurocognitive models of visual word recognition, semantic processing, and eye movement control during reading.

Schuster, S., Hawelka, S., Hutzler, F., Kronbichler, M. and Richlan, F. (2016). Words in Context: The Effects of Length, Frequency, and Predictability on Brain Responses During Natural Reading. Cerebral Cortex, 26(10), pp.3889-3904.

__________________________________________


Craniofacial genetics: Where have we been and where are we going?

Looking at faces is always illuminating. Perhaps, this is because our faces reveal so much about us, ranging from our evolutionary history to our embryological development, genetic endowment, propensity for disease, current health status, and exposures over our lifespan. The structure of our faces may even reveal insights into our personalities—an idea that stretches back to the ancient Greeks. The face is a complex constellation of parts serving functions as diverse as sight, hearing, smell, breathing, nourishment and digestion, protection, and communication. Despite our collective fascination, we still have limited understanding of the molecular machinery that controls how our faces form or how morphological variation in facial features arises, from the typical and often subtle differences that endow each of us with our unique facial appearance to the rare craniofacial malformations seen in the clinic. However, we are making incredible progress in these areas, and the pace of discovery is poised to accelerate rapidly, facilitated by the emergence of high-throughput experimental methods, advances in computational modeling, and the investment and availability of large-scale craniofacial data resources, (e.g., the FaceBase Consortium).

Weinberg, S., Cornell, R. and Leslie, E. (2018). Craniofacial genetics: Where have we been and where are we going?. PLOS Genetics, 14(6), p.e1007438.

_________________________________________


Vision keeps maturing until mid-life: Brain research recasts timeline for visual cortex development

The visual cortex, the human brain’s vision-processing centre that was previously thought to mature and stabilize in the first few years of life, actually continues to develop until sometime in the late 30s or early 40s, a McMaster neuroscientist and her colleagues have found. Kathryn Murphy, a professor in McMaster’s department of Psychology, Neuroscience and Behaviour, led the study using post-mortem brain-tissue samples from 30 people ranging in age from 20 days to 80 years.

Her analysis of proteins that drive the actions of neurons in the visual cortex at the back of the brain recasts previous understanding of when that part of the brain reaches maturity, extending the timeline until about age 36, plus or minus 4.5 years.

The finding was a surprise to Murphy and her colleagues, who had expected to find that the cortex reached its mature stage by 5 to 6 years, consistent with previous results from animal samples and with prevailing scientific and medical belief.

“There’s a big gap in our understanding of how our brains function,” says Murphy. “Our idea of sensory areas developing in childhood and then being static is part of the challenge. It’s not correct.”

The research appears May 29 in The Journal of Neuroscience.

Murphy says treatment for conditions such as amblyopia or “lazy eye”, for example, have been based on the idea that only children could benefit from corrective therapies, since it was thought that treating young adults would be pointless because they had passed the age when their brains could respond.

Though the research is isolated to the visual cortex, it suggests that other areas of the brain may also be much more plastic for much longer than previously thought, Murphy says.

Siu, C., Beshara, S., Jones, D. and Murphy, K. (2017). Development of glutamatergic proteins in human visual cortex across the lifespan. The Journal of Neuroscience, pp.2304-16.

_________________________________________

Experimental evidence that primate trichromacy is well suited for detecting primate social colour signals

Extract:

Primate trichromatic colour vision has been hypothesized to be well tuned for detecting variation in facial coloration, which could be due to selection on either signal wavelengths or the sensitivities of the photoreceptors themselves.

We provide one of the first empirical tests of this idea by asking whether, when compared with other visual systems, the information obtained through primate trichromatic vision confers an improved ability to detect the changes in facial colour that female macaque monkeys exhibit when they are proceptive.

We presented pairs of digital images of faces of the same monkey to human observers and asked them to select the proceptive face. We tested images that simulated what would be seen by common catarrhine trichromatic vision, two additional trichromatic conditions and three dichromatic conditions. Performance under conditions of common catarrhine trichromacy, and trichromacy with narrowly separated LM cone pigments (common in female platyrrhines), was better than for evenly spaced trichromacy or for any of the dichromatic conditions.

These results suggest that primate trichromatic colour vision confers excellent ability to detect meaningful variation in primate face colour. This is consistent with the hypothesis that social information detection has acted on either primate signal spectral reflectance or photoreceptor spectral tuning, or both.

Hiramatsu, C., Melin, A., Allen, W., Dubuc, C. and Higham, J. (2017). Experimental evidence that primate trichromacy is well suited for detecting primate social colour signals. Proceedings of the Royal Society B: Biological Sciences, 284(1856), p.20162458.

_________________________________________


Why People With Autism Tend to Avoid Eye Contact

Abstract:

Individuals with Autism Spectrum Disorder (ASD) seem to have difficulties looking others in the eyes, but the substrate for this behavior is not well understood. The subcortical pathway, which consists of superior colliculus, pulvinar nucleus of the thalamus, and amygdala, enables rapid and automatic face processing. A specific component of this pathway – i.e., the amygdala – has been shown to be abnormally activated in paradigms where individuals had to specifically attend to the eye-region; however, a direct examination of the effect of manipulating the gaze to the eye-regions on all the components of the subcortical system altogether has never been performed. The subcortical system is particularly important as it shapes the functional specialization of the face-processing cortex during development. Using functional MRI, we investigated the effect of constraining gaze in the eye-region during dynamic emotional face perception in groups of participants with ASD and typical controls. We computed differences in activation in the subcortical face processing system (superior colliculus, pulvinar nucleus of the thalamus and amygdala) for the same stimuli seen freely or with the gaze constrained in the eye-region. Our results show that when constrained to look in the eyes, individuals with ASD show abnormally high activation in the subcortical system, which may be at the basis of their eye avoidance in daily life.

Hadjikhani, N., Åsberg Johnels, J., Zürcher, N., Lassalle, A., Guillon, Q., Hippolyte, L., Billstedt, E., Ward, N., Lemonnier, E. and Gillberg, C. (2017). Look me in the eyes: constraining gaze in the eye-region provokes abnormally high subcortical activation in autism. Scientific Reports, 7(1).

_______________________________

La vie secrète des chansons (The Secret Life of Songs): Martial Tricoche of the band Manau, on the programme in 2018

_______________________________

L’île Maurice/Mauritius | The “underground” yet world-famous band A Riot in Heaven, influenced by intricate rock-themed musical arrangements and literary lyrics, gives us a perfect example of artistic assimilation and Franco-British cultural synchronisation in the English- and French-speaking Western world.

More here: “A Riot in Heaven’s Other Albums”

_____________________________________

“Beautiful” by Marshall Bruce Mathers III AKA Eminem // Album: Relapse (2009)

[Age Notice / 21+: The artistic content and packaging contain lyrics, opinions and poetry that may offend the more sensitive among us. Please bear in mind that this website takes no responsibility for any moral damage that may be caused by this entertainment product, which is highly recommended for a mature & “sensible” audience.]

Vincent Vinel – Lose Yourself

_____________________________________

A generation of music artists reflects on the thinker, lyricist, vocalist & instrumentalist Kurt D. Cobain

Extract:

-Ryan Hayes // Vocalist

“I will never forget, as a kid, reading the liner notes of Incesticide (1992), where Kurt told his own fans that if they hated homosexuals, or people of different color, or women, to f*** off and stop buying Nirvana records. Seeing someone with that much fame be so unapologetic and true to his own beliefs, regardless of how it could potentially affect record sales or his popularity, was life changing for me…”

-Phil Leavitt // Singer/Drummer

“Kurt was an inspiration to so many different people. He had a way to connect with kids who didn’t fit in with society or felt lost. Nirvana was an outlet for that. Very real and that is why his music will live on forever…

…”

Full Article: http://crypticrock.com/reflecting-on-kurt-cobain-and-nirvana-20-years-later-2/

_____________________________________

“Science sans conscience égale science de l’inconscience… On envoie des pigeons défendre la colombe, qui avancent comme des pions défendre des bombes…” - MC Solaar

[Translation: Science without conscience equals the science of unconsciousness… We send pigeons to defend the dove, who advance like pawns to defend bombs… - MC Solaar]

_____________________________________

The Role of Corticostriatal Systems in Speech Category Learning

Abstract:

One of the most difficult category learning problems for humans is learning nonnative speech categories. While feedback-based category training can enhance speech learning, the mechanisms underlying these benefits are unclear. In this functional magnetic resonance imaging study, we investigated neural and computational mechanisms underlying feedback-dependent speech category learning in adults. Positive feedback activated a large corticostriatal network including the dorsolateral prefrontal cortex, inferior parietal lobule, middle temporal gyrus, caudate, putamen, and the ventral striatum. Successful learning was contingent upon the activity of domain-general category learning systems: the fast-learning reflective system, involving the dorsolateral prefrontal cortex that develops and tests explicit rules based on the feedback content, and the slow-learning reflexive system, involving the putamen in which the stimuli are implicitly associated with category responses based on the reward value in feedback. Computational modeling of response strategies revealed significant use of reflective strategies early in training and greater use of reflexive strategies later in training. Reflexive strategy use was associated with increased activation in the putamen. Our results demonstrate a critical role for the reflexive corticostriatal learning system as a function of response strategy and proficiency during speech category learning.

Yi, H., Maddox, W., Mumford, J. and Chandrasekaran, B. (2014). The Role of Corticostriatal Systems in Speech Category Learning. Cerebral Cortex, 26(4), pp.1409-1420.

_____________________________________

Language Control in Bilinguals: Monitoring and Response Selection

Abstract:

Language control refers to the cognitive mechanism that allows bilinguals to correctly speak in one language avoiding interference from the nontarget language. Bilinguals achieve this feat by engaging brain areas closely related to cognitive control. However, 2 questions still await resolution: whether this network is differently engaged when controlling nonlinguistic representations, and whether this network is differently engaged when control is exerted upon a restricted set of lexical representations that were previously used (i.e., local control) as opposed to control of the entire language system (i.e., global control). In the present event-related functional magnetic resonance imaging study, we investigated these 2 questions by employing linguistic and nonlinguistic blocked switching tasks in the same bilingual participants. We first report that the left prefrontal cortex is driven similarly for control of linguistic and nonlinguistic representations, suggesting its domain-general role in the implementation of response selection. Second, we propose that language control in bilinguals is hierarchically organized with the dorsal anterior cingulate cortex/presupplementary motor area acting as the supervisory attentional system, recruited for increased monitoring demands such as local control in the second language. On the other hand, prefrontal, inferior parietal areas and the caudate would act as the response selection system, tailored for language selection for both local and global control.

Branzi, F., Della Rosa, P., Canini, M., Costa, A. and Abutalebi, J. (2015). Language Control in Bilinguals: Monitoring and Response Selection. Cerebral Cortex, 26(6), pp.2367-2380.

__________________________________

https://twitter.com/artistdegas/status/933396539602587649

__________________________________

Listening to classical music modulates genes that are responsible for brain functions

A Finnish study group has investigated how listening to classical music affects the gene expression profiles of both musically experienced and inexperienced participants. All the participants listened to W.A. Mozart’s Violin Concerto No. 3 in G major, K. 216, which lasts 20 minutes.

Listening to music enhanced the activity of genes involved in dopamine secretion and transport, synaptic function, learning and memory. One of the most up-regulated genes, synuclein-alpha (SNCA), is a known risk gene for Parkinson’s disease and is located in the strongest linkage region for musical aptitude. SNCA is also known to contribute to song learning in songbirds.

“The up-regulation of several genes that are known to be responsible for song learning and singing in songbirds suggest a shared evolutionary background of sound perception between vocalizing birds and humans”, says Dr. Irma Järvelä, the leader of the study.

In contrast, listening to music down-regulated genes that are associated with neurodegeneration, suggesting a neuroprotective role for music.

“The effect was only detectable in musically experienced participants, suggesting the importance of familiarity and experience in mediating music-induced effects”, researchers remark.

The findings give new information about the molecular genetic background of music perception and evolution, and may give further insights about the molecular mechanisms underlying music therapy.

Kanduri, C., Raijas, P., Ahvenainen, M., Philips, A., Ukkola-Vuoti, L., Lähdesmäki, H. and Järvelä, I. (2015). The effect of listening to music on human transcriptome. PeerJ, 3, p.e830.

__________________________________

Study of healthy adults finds that two types of extroverts have more brain matter than typical brains

#Society #Neuroscience #Evolution #Science #Mind

DOI:10.3758/s13415-014-0331-6

http://goo.gl/7J8Rxj

__________________________________

Why Kids With High IQs Are More Likely to Take Drugs

People with high IQs are more likely to smoke marijuana and take other illegal drugs, compared with those who score lower on intelligence tests, according to a new study from the U.K.

“It’s counterintuitive,” says lead author James White of the Center for the Development and Evaluation of Complex Interventions for Public Health Improvement at Cardiff University in Wales. “It’s not what we thought we would find.”

The IQ effect was larger in women: women in the top third of the IQ range at age 5 were more than twice as likely to have taken marijuana or cocaine by age 30, compared with those scoring in the bottom third. The men with the highest IQs were nearly 50% more likely to have taken amphetamines and 65% more likely to have taken ecstasy, compared to those with lower scores.

And these results held even when researchers controlled for factors like socioeconomic status and psychological distress, which are also correlated with rates of drug use.

So why might smarter kids be more likely to try drugs? “People with high IQs are more likely to score high on personality scales of openness to experience,” says White. “They may be more willing to experiment and seek out novel experiences.”

That could potentially be linked to the boredom and social isolation experienced by many gifted children, the authors note. But since the link between IQ and drug use remains independent of psychological distress, that can’t be all that’s going on. “It rules out the argument that the only reason people take illegal drugs is to self-medicate,” says White.

White, J. and Batty, G. (2011). Intelligence across childhood in relation to illegal drug use in adulthood: 1970 British Cohort Study. Journal of Epidemiology & Community Health, 66(9), pp.767-774.

__________________________________

Temporal Dynamics of the Default Mode Network Characterize Meditation-Induced Alterations in Consciousness

Extract:

“In this study, we analyzed the DMN microstate to understand the mechanisms of meditation-induced alterations in consciousness. By contrasting healthy controls (HCs) at rest against expert meditators at rest and during meditation, we explored both state and trait changes in DMN-microstate dynamics produced by meditation with a hypothesis that these could cause differential alterations in its duration and frequency. The state changes felt during meditation are usually described as a deep sense of calm peacefulness, cessation or slowing of mind’s internal dialog and conscious awareness merging completely with the object of meditation (Brown, 1977; Wallace, 1999). Alongside, long-term expertise in meditation also produces durable changes in neural dynamics, with improvements in mental and physical health presumably due to its trait effects (Chiesa and Serretti, 2010, 2011; Hofmann et al., 2010). Here, we describe changes in the spatial configuration of the DMN as a function of meditation, and show that state and trait influences on the temporal dynamics of the DMN microstate can indeed be dissociated…

…this reflected a trait effect of meditation, highlighting its role in producing durable changes in temporal dynamics of the DMN. Taken together, these findings shed new light on short and long-term consequences of meditation practice on this key brain network.

Panda, R., Bharath, R., Upadhyay, N., Mangalore, S., Chennu, S. and Rao, S. (2016). Temporal Dynamics of the Default Mode Network Characterize Meditation-Induced Alterations in Consciousness. Frontiers in Human Neuroscience, 10.

__________________________________

Mindfulness practice leads to increases in regional brain gray matter density

Therapeutic interventions that incorporate training in mindfulness meditation have become increasingly popular, but to date, little is known about neural mechanisms associated with these interventions. Mindfulness-Based Stress Reduction (MBSR), one of the most widely used mindfulness training programs, has been reported to produce positive effects on psychological well-being and to ameliorate symptoms of a number of disorders.

Here, we report a controlled longitudinal study to investigate pre-post changes in brain gray matter concentration attributable to participation in an MBSR program. Anatomical MRI images from sixteen healthy, meditation-naïve participants were obtained before and after they underwent the eight-week program.

Changes in gray matter concentration were investigated using voxel-based morphometry, and compared to a wait-list control group of 17 individuals. Analyses in a priori regions of interest confirmed increases in gray matter concentration within the left hippocampus. Whole brain analyses identified increases in the posterior cingulate cortex, the temporo-parietal junction, and the cerebellum in the MBSR group compared to the controls. The results suggest that participation in MBSR is associated with changes in gray matter concentration in brain regions involved in learning and memory processes, emotion regulation, self-referential processing, and perspective taking.

Hölzel, B., Carmody, J., Vangel, M., Congleton, C., Yerramsetti, S., Gard, T. and Lazar, S. (2011). Mindfulness practice leads to increases in regional brain gray matter density. Psychiatry Research: Neuroimaging, 191(1), pp.36-43.

__________________________________

#Meditators have #brains that are physically 7 years younger, on average, than non-meditators

http://digest.bps.org.uk/2016/04/experienced-meditators-have-brains-that.html

Applying a social learning theoretical framework to music therapy as a prevention and intervention for bullies and victims of bullying